Production Preparation Processes

We have several processes and tools within the Zivid SDK to help you set up your system and prepare it for production. The essential ones that we advise doing before deploying are:

warm-up

infield correction

hand-eye calibration

Using these processes, we want to imitate the production conditions to optimize the camera for working temperature, distance, and FOV. Other tools that can help you reduce the processing time during production and hence maximize your picking rate are:

transforming and ROI box filtering

downsampling

Robot Calibration

Before starting production, you should make sure your robot is well calibrated, demonstrating good positioning accuracy. For more information about robot kinematic calibration and robot mastering/zeroing, contact your robot supplier.

Warm-up

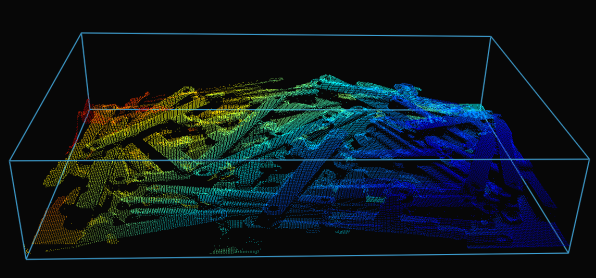

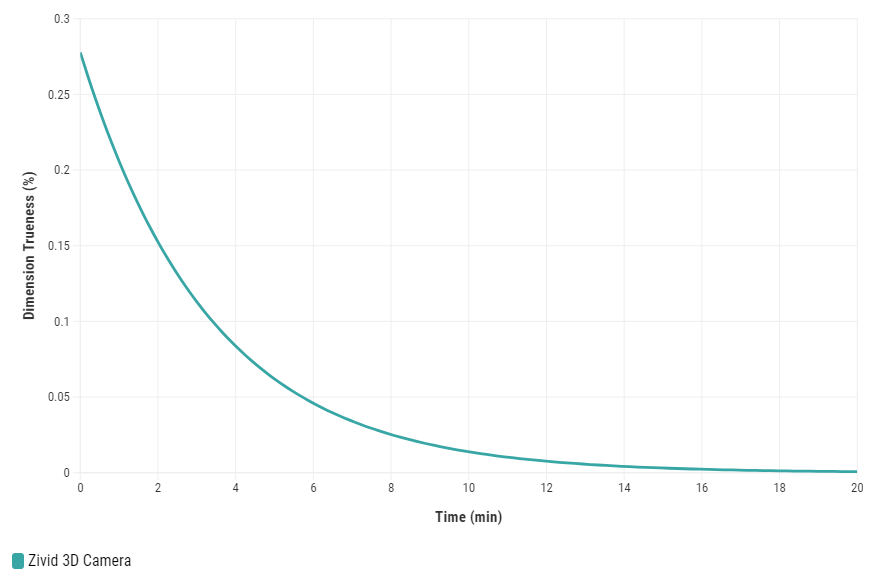

Allowing the Zivid 3D camera to warm up and reach thermal equilibrium can improve the overall accuracy and success of an application. This is recommended if the application requires tight tolerances, e.g., picking applications with < 5 mm tolerance per meter distance from the camera. A warmed-up camera will improve both infield correction and hand-eye calibration results.

To warm up your camera, run our code sample, providing the capture cycle time and the path to your camera settings.

Sample: warmup.py

python /path/to/warmup.py --settings-path /path/to/settings.yml --capture-cycle 6.0

To better understand why warm-up is needed and how to perform it with the SDK, read our Warm-up article.
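The idea behind capturing at a fixed cycle can be sketched as a simple timing loop. This is a minimal illustration, not the contents of warmup.py: the `capture` callable is a hypothetical stand-in for a real camera capture, and the `warm_up` function, its parameters, and the injectable `clock` and `sleep` arguments are assumptions made for this example.

```python
import time
from typing import Callable


def warm_up(
    capture: Callable[[], None],
    duration_s: float,
    capture_cycle_s: float,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call `capture` once per cycle until `duration_s` has elapsed.

    Returns the number of captures performed.
    """
    start = clock()
    captures = 0
    while clock() - start < duration_s:
        cycle_start = clock()
        capture()  # hypothetical stand-in for a real camera capture
        captures += 1
        # Sleep for whatever remains of the cycle after the capture finished.
        remaining = capture_cycle_s - (clock() - cycle_start)
        if remaining > 0:
            sleep(remaining)
    return captures
```

Matching the capture cycle to the one expected in production is the point of the `--capture-cycle` argument above: the camera then settles at the temperature it will actually operate at.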

Tip

If your camera regularly goes more than 10 minutes without capturing, it is highly beneficial to keep Thermal Stabilization enabled.

Infield Correction

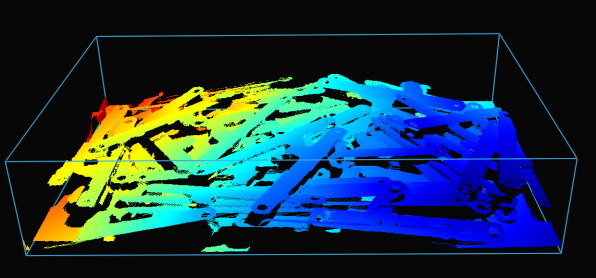

Infield correction is a maintenance tool designed to verify and correct the dimension trueness of Zivid cameras. The user can check the dimension trueness of the point cloud at different points in the field of view (FOV) and determine if it is acceptable for their application. If the verification shows the camera is not sufficiently accurate for the application, then a correction can be performed to increase the dimension trueness of the point cloud. The average dimension trueness error from multiple measurements is expected to be close to zero (<0.1%).
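To make the percentage above concrete, the following sketch computes a signed relative error from a measured distance and a known reference distance, then averages it over several measurements. It assumes the error is expressed as the relative deviation from the reference length; the function names and the sample values are hypothetical and are not part of the Zivid SDK.

```python
from statistics import mean
from typing import Iterable, Tuple


def trueness_error_percent(measured_mm: float, reference_mm: float) -> float:
    """Signed relative error of a measured distance, in percent."""
    return (measured_mm - reference_mm) / reference_mm * 100.0


def mean_trueness_error_percent(pairs: Iterable[Tuple[float, float]]) -> float:
    """Average signed error over (measured_mm, reference_mm) pairs."""
    return mean(trueness_error_percent(m, r) for m, r in pairs)


# Hypothetical measurements of a 100 mm reference distance at three positions.
measurements = [(100.12, 100.0), (99.91, 100.0), (100.03, 100.0)]
average_error = mean_trueness_error_percent(measurements)
print(f"average dimension trueness error: {average_error:+.3f} %")
```

Because the error is signed, deviations in opposite directions cancel in the average, which is why it is worth also looking at the individual measurements when judging whether the camera is accurate enough for the application.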

Why is this necessary?

Our cameras are made to withstand industrial working environments and continue to return quality point clouds. However, like most high-precision electronic instruments, they sometimes need a small adjustment to stay at their best performance. When a camera experiences substantial changes in its environment or heavy handling, it may require a correction to work optimally in its new setting.

Read more about Infield Correction if this is your first time doing it.

When running infield verification, ensure that the dimensional trueness is good at both the top and bottom of the bin. For on-arm applications, move the robot to the capture pose when doing infield verification. If the verification results are good, you don’t need to run infield correction.

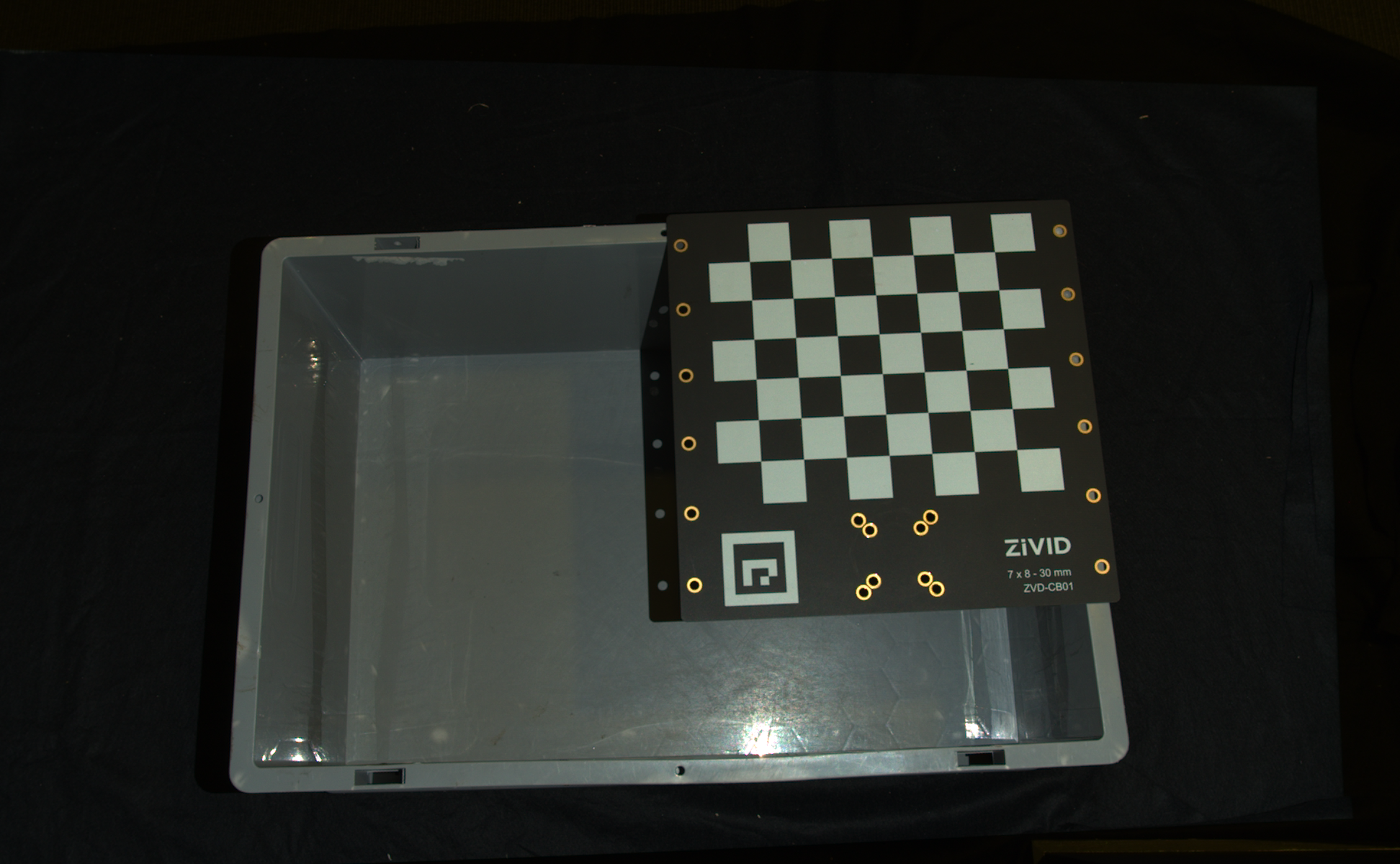

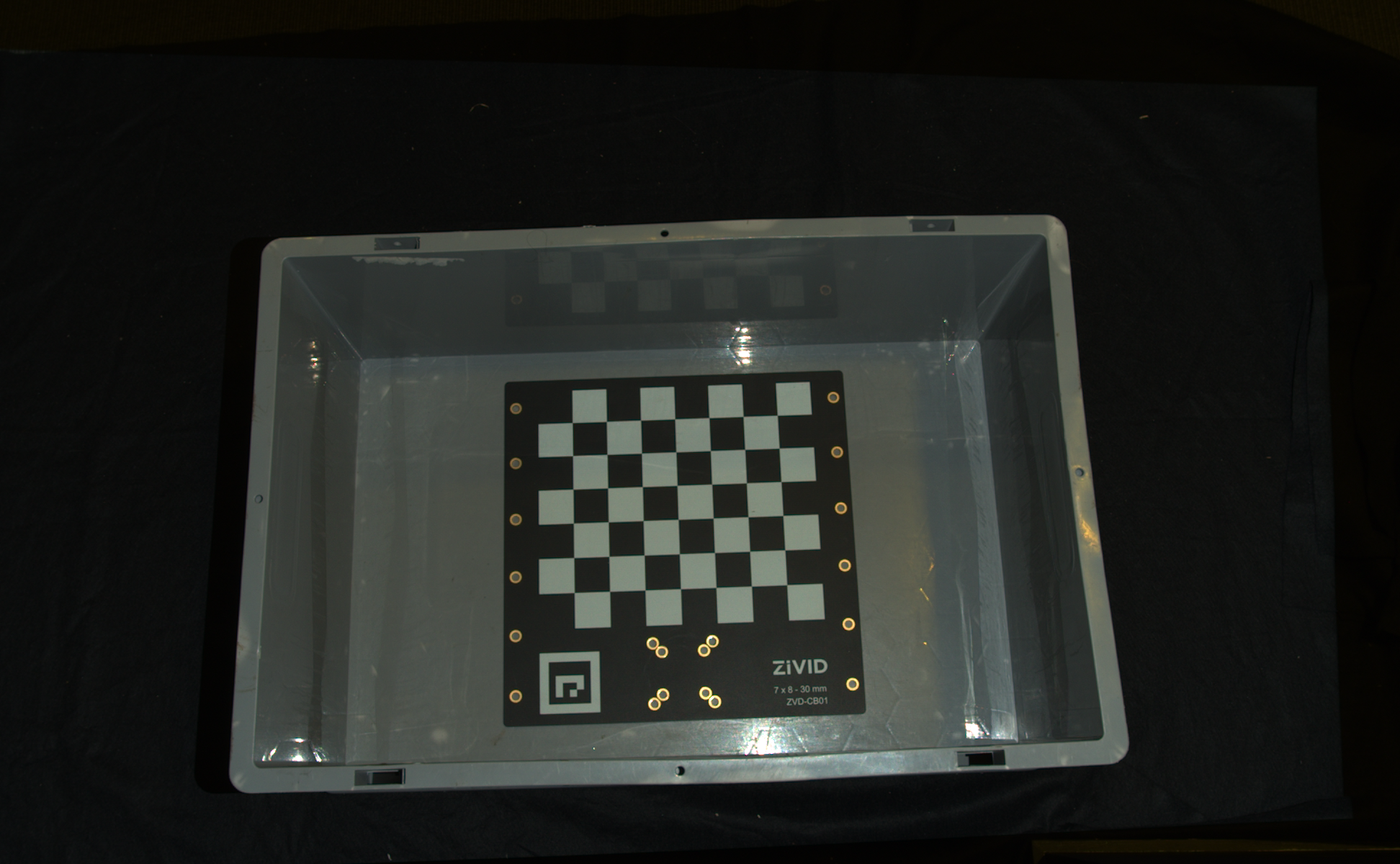

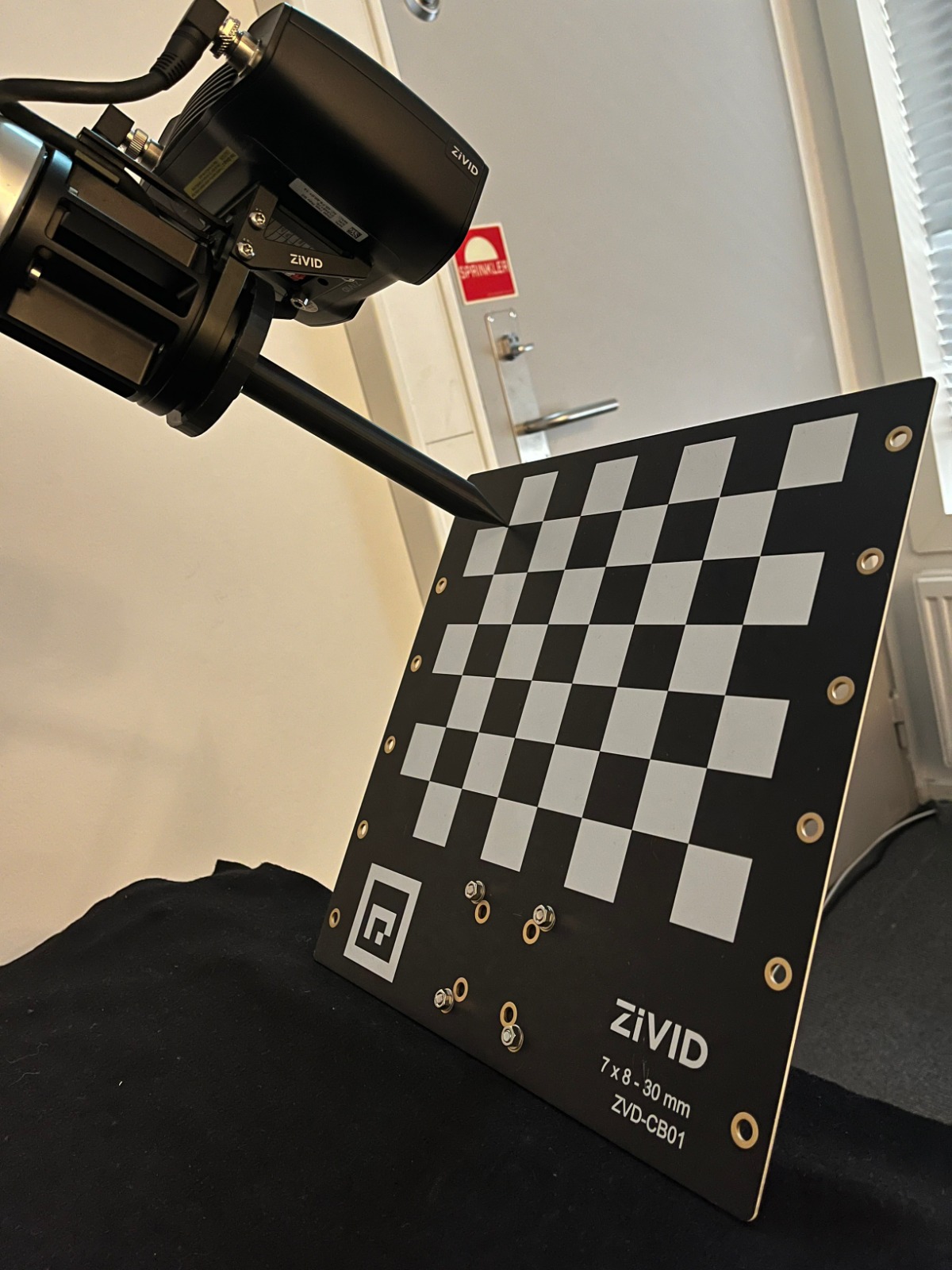

When infield correction is required, span the entire working volume for optimal results. This means placing the Zivid calibration board at multiple locations for a given distance, and repeating this at different distances, while ensuring the entire board is in the FOV. For bin picking, a couple of locations at the bottom and a couple at the top of the bin are sufficient. Check Guidelines for Performing Infield Correction for more details.

To run infield verification and/or correction on a camera you can use:

Zivid Studio

CLI tool

SDK

Tip

If you are running infield correction for the first time, we recommend using Zivid Studio.

Check out Running Infield Correction for a step-by-step guide on running infield correction.

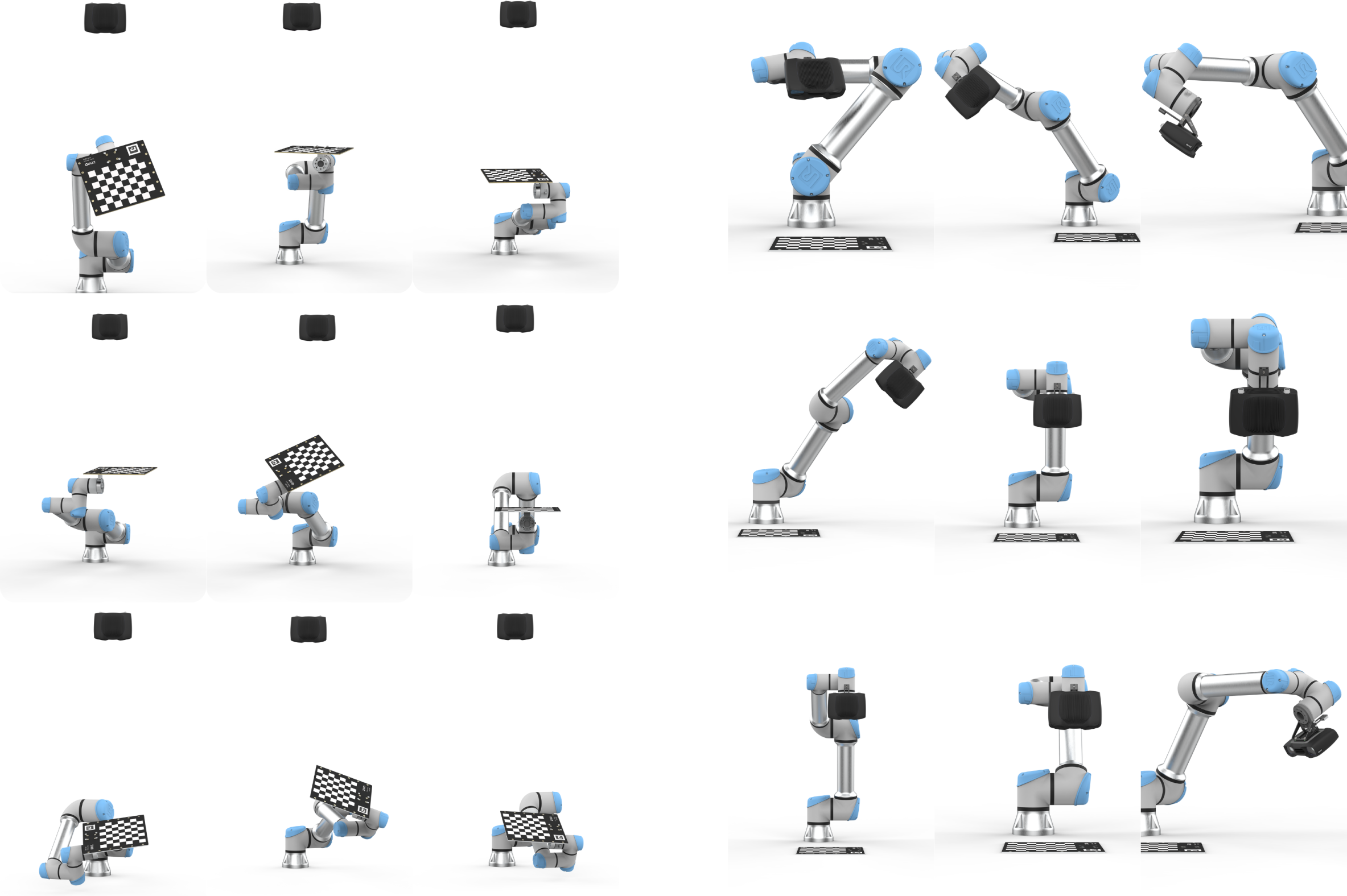

Hand-Eye Calibration

In the table below, you can find our recommendations on which hand-eye calibration tool to use based on your goals and requirements. For a detailed analysis of the tools and their features, see How to run and integrate Zivid Hand-Eye Calibration.

| Goal | Recommended Tool |
|---|---|
| Guided, no-code workflow | |
| Minimal integration example | |
| Existing dataset | |
| Any robot | |
| UR robot | |

After completing hand-eye calibration, you may want to verify that the resulting transformation matrix is correct and within the accuracy requirements. We recommend performing a touch test with the robot, which is a physical test that requires a verification end-of-arm tool. For a detailed walkthrough, see the tutorial: Any Robot + RoboDK + Python: Verify Hand-Eye Calibration Result via Touch Test.

Alternative options are provided on the How to Verify Hand-Eye Calibration page.
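As a rough illustration of what the transformation matrix is used for, the sketch below applies a 4x4 homogeneous transform to a point expressed in the camera frame and compares the result with a position touched by the robot. It assumes a stationary (eye-to-hand) camera, so the matrix maps camera coordinates to robot base coordinates. The matrix values, the points, and the function names are hypothetical and do not come from a real calibration.

```python
import math
from typing import List, Sequence

Matrix4 = Sequence[Sequence[float]]


def transform_point(transform: Matrix4, point_xyz: Sequence[float]) -> List[float]:
    """Apply a 4x4 homogeneous transform to a 3D point."""
    x, y, z = point_xyz
    homogeneous = (x, y, z, 1.0)
    # Only the first three rows are needed; the last row is (0, 0, 0, 1).
    return [sum(transform[r][c] * homogeneous[c] for c in range(4)) for r in range(3)]


def position_error_mm(predicted: Sequence[float], touched: Sequence[float]) -> float:
    """Euclidean distance between the predicted and the physically touched point."""
    return math.dist(predicted, touched)


# Hypothetical hand-eye result: camera looks down at the robot base frame
# (180 degree rotation about X) and sits 1700 mm above it.
camera_to_base = [
    [1.0, 0.0, 0.0, 500.0],
    [0.0, -1.0, 0.0, 0.0],
    [0.0, 0.0, -1.0, 1700.0],
    [0.0, 0.0, 0.0, 1.0],
]

point_in_camera = (100.0, 50.0, 1200.0)
point_in_base = transform_point(camera_to_base, point_in_camera)
print(point_in_base)  # [600.0, -50.0, 500.0]
```

In a touch test, the robot moves its verification tool to the transformed point, and the remaining offset between the tool tip and the physical target indicates how accurate the calibration is.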

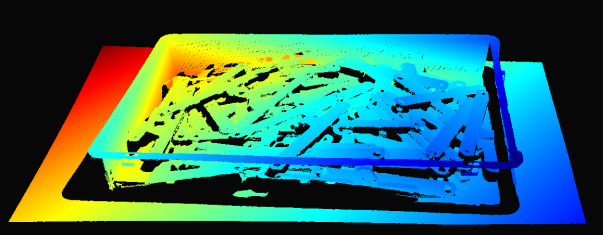

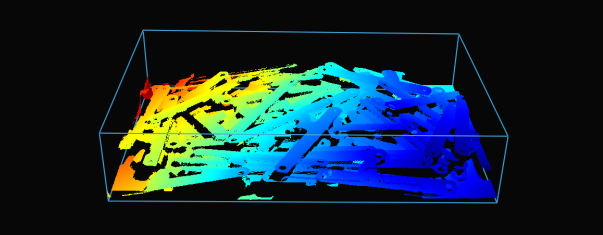

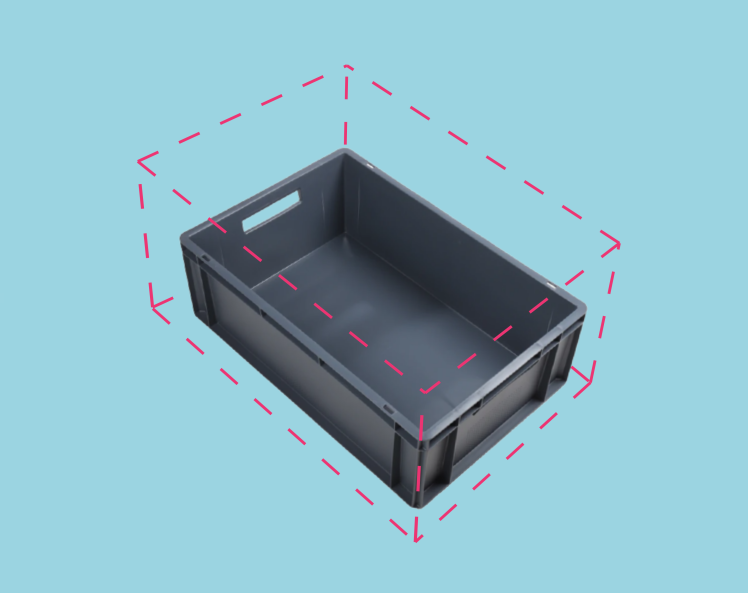

ROI Box Filtering

The camera field of view is often larger than our Region of Interest (ROI), e.g., a bin. To optimize data processing, we can set an ROI box around the bin and crop the point cloud accordingly, capturing only the bin contents. ROI box filtering not only decreases the capture time but also reduces the number of data points used by the algorithm, consequently enhancing its speed and efficiency.

Tip

Smaller point clouds are faster to capture, and can make the detection faster and total picking cycle times shorter.

For an implementation example, check out ROI Box via Checkerboard. This tutorial demonstrates how to filter the point cloud using the Zivid calibration board, based on an ROI box defined relative to the checkerboard.
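Conceptually, box filtering keeps only the points that fall inside a box. The sketch below shows this for an axis-aligned box, assuming the points have already been transformed into the frame in which the box is defined (for example, the checkerboard frame). The function name and the box dimensions are hypothetical, and this is not how the SDK implements ROI filtering.

```python
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def filter_roi_box(points: Iterable[Point], box_min: Point, box_max: Point) -> List[Point]:
    """Keep the points lying inside the axis-aligned box (bounds inclusive)."""
    return [
        p
        for p in points
        if all(lo <= value <= hi for value, lo, hi in zip(p, box_min, box_max))
    ]


# Hypothetical box matching a 600 x 400 x 300 mm bin, in millimeters.
box_min = (0.0, 0.0, 0.0)
box_max = (600.0, 400.0, 300.0)

points = [
    (0.0, 0.0, 0.0),      # on the box corner: kept
    (50.0, 50.0, 50.0),   # inside: kept
    (700.0, 10.0, 10.0),  # outside in X: dropped
    (10.0, 10.0, -5.0),   # outside in Z: dropped
]
inside = filter_roi_box(points, box_min, box_max)
print(f"{len(inside)} of {len(points)} points kept")
```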

Example and Performance

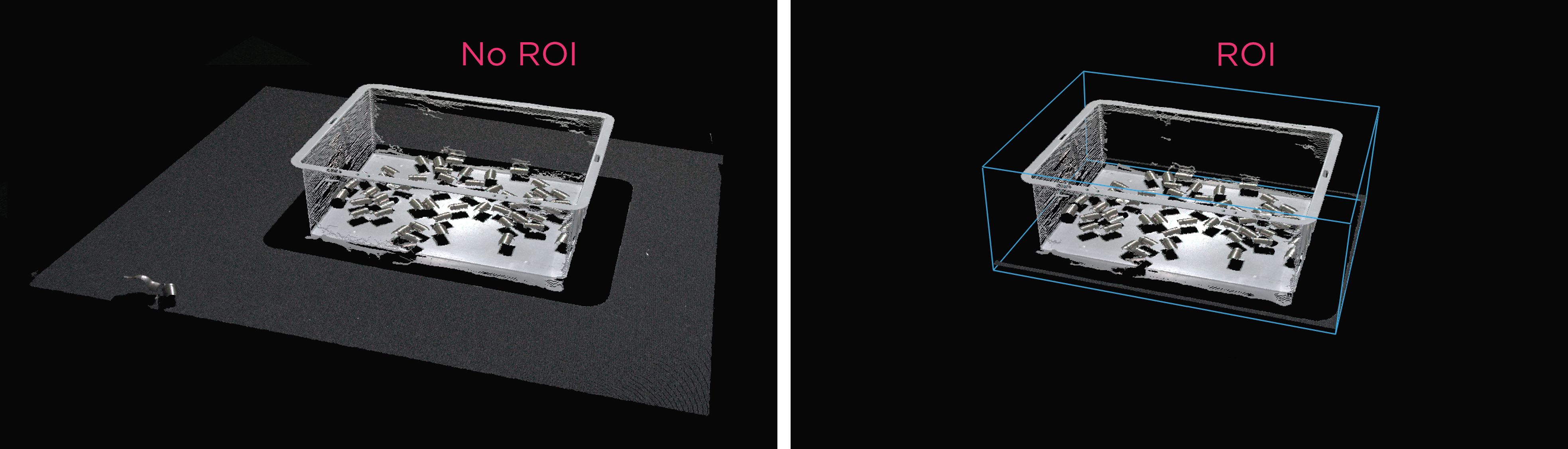

Here is an example of heavy point cloud processing using a low-end PC (Intel Iris Xe integrated laptop GPU and a 1G network card) where ROI box filtering is beneficial.

Imagine a robot picking from a 600 x 400 x 300 mm bin with a stationary-mounted Zivid 2+ MR130 camera. For good robot clearance, the camera is mounted 1700 mm from the top of the bin, providing a FOV of approximately 1000 x 800 mm. At this distance, much of the FOV lies outside the ROI, so roughly 20% of the pixel columns and 25% of the pixel rows can be cropped from each side. As the table below shows, ROI box filtering significantly reduces the capture time.

| Preset | Acquisition Time (No ROI) | Acquisition Time (ROI) | Capture Time (No ROI) | Capture Time (ROI) |
|---|---|---|---|---|
| Manufacturing Specular | 0.64 s | 0.64 s | 2.1 s | 1.0 s |
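The crop percentages and the speed-up in this example follow from simple arithmetic, sketched below. The calculation assumes the bin is centered in the FOV and uses only the figures quoted above; the function name is hypothetical.

```python
def crop_fraction_per_side(fov_mm: float, roi_mm: float) -> float:
    """Fraction of the FOV that can be cropped from each side, assuming a centered ROI."""
    if roi_mm > fov_mm:
        raise ValueError("ROI cannot be larger than the FOV")
    return (fov_mm - roi_mm) / 2.0 / fov_mm


# Figures from the example: 1000 x 800 mm FOV, 600 x 400 mm bin.
column_crop = crop_fraction_per_side(fov_mm=1000.0, roi_mm=600.0)
row_crop = crop_fraction_per_side(fov_mm=800.0, roi_mm=400.0)

# Share of pixels left after cropping both sides in both directions.
remaining_pixels = (1.0 - 2.0 * column_crop) * (1.0 - 2.0 * row_crop)

# Capture times for the Manufacturing Specular preset, without and with ROI.
speedup = 2.1 / 1.0

print(f"crop per side: {column_crop:.0%} of columns, {row_crop:.0%} of rows")
print(f"pixels remaining: {remaining_pixels:.0%}")
print(f"capture speed-up: {speedup:.1f}x")
```

The acquisition time is unchanged at 0.64 s, so the gain in this example comes entirely from the part of the capture that follows acquisition, where only about 30% of the pixels remain to be handled.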

Downsampling

Note

Choosing a preset includes a choice of downsampling. To understand what this means, refer to Sampling (3D). That article also helps you decide which downsampling settings to use if you do not choose a preset.

Some applications do not require high-density point cloud data. Examples are box detection by fitting a plane to the box surface, and CAD matching where the object has distinct and easily identifiable features. In addition, a full-resolution point cloud is often too large for machine vision algorithms to process at the speed required by the application. It is in such applications that point cloud downsampling comes into play.

In the context of point clouds, downsampling is the reduction of spatial resolution while keeping the same 3D representation. It is typically used to transform the data to a more manageable size and thus reduce the storage and processing requirements.

There are two ways to downsample a point cloud with the Zivid SDK:

Via the setting Settings::Processing::Resampling(Resampling), which means it’s controlled via the capture settings.

Via the API PointCloud::downsample.

In both cases, the same operation is applied to the point cloud. With the API, you can either downsample in-place, which modifies the current point cloud, or get the downsampled data as a new point cloud instance, which does not alter the existing point cloud.

Zivid SDK supports the following downsampling rates: by2x2, by3x3, and by4x4, with the possibility to perform downsampling multiple times.
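To illustrate what an NxN downsampling rate means, the sketch below reduces an organized grid of points by averaging each NxN block into a single point. Plain block averaging is used here as a stand-in and should not be read as the algorithm the SDK applies. The function name and the grid representation (a list of rows, with `None` marking invalid points) are assumptions for this example.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]
Grid = List[List[Optional[Point]]]


def downsample_grid(grid: Grid, factor: int) -> Grid:
    """Average each factor x factor block of valid points into one point."""
    rows, cols = len(grid), len(grid[0])
    result: Grid = []
    # Rows and columns that do not fill a complete block are dropped.
    for r in range(0, rows - rows % factor, factor):
        out_row: List[Optional[Point]] = []
        for c in range(0, cols - cols % factor, factor):
            block = [grid[r + dr][c + dc] for dr in range(factor) for dc in range(factor)]
            valid = [p for p in block if p is not None]
            if valid:
                # Average X, Y, and Z separately over the valid points.
                out_row.append(tuple(sum(axis) / len(valid) for axis in zip(*valid)))
            else:
                out_row.append(None)
        result.append(out_row)
    return result


# A 4 x 4 grid of points on a flat surface 1000 mm from the camera.
grid = [[(float(c), float(r), 1000.0) for c in range(4)] for r in range(4)]

by2x2 = downsample_grid(grid, 2)               # 2 x 2 grid
by2x2_twice = downsample_grid(by2x2, 2)        # 1 x 1 grid
by4x4 = downsample_grid(grid, 4)               # 1 x 1 grid
print(len(by2x2), len(by2x2[0]), by2x2[0][0])  # 2 2 (0.5, 0.5, 1000.0)
```

For a grid with no invalid points, applying by2x2 twice gives the same result as by4x4 under this averaging scheme, which is one way to read "performing downsampling multiple times".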

To downsample a point cloud, you can run our code sample, or you can skip it now, and do it as part of the next step of this tutorial.

Sample: downsample.py

python /path/to/downsample.py --zdf-path /path/to/file.zdf

If you don’t have a ZDF file, you can run the following code sample. It captures a Zivid point cloud with your settings and saves it to a file.

Sample: capture_with_settings_from_yml.py

python /path/to/capture_with_settings_from_yml.py --settings-path /path/to/settings.yml

To read more about downsampling, go to Downsample.

Congratulations! You have covered everything on the Zivid side to be ready to put your bin picking system into production. The following section is Maintenance which covers specific processes we advise carrying out to ensure that the bin picking cell is stable with minimum downtime.

Version History

| SDK | Changes |
|---|---|
| 2.12.0 | Added section to demonstrate how ROI box filtering provides a speed-up in capture time. Downsampling can now also be done via |
| 2.10.0 | Monochrome Capture introduces a faster alternative to Downsampling. |