Hand-Eye Calibration Process

Gathering the data required to perform the calibration involves the following steps:

1. The robot executes a series of sufficiently distinct movements (either human-operated or automatically planned).
2. After each movement, the camera captures an image of the calibration object.
3. The calibration object pose is extracted from the camera image.
4. The corresponding robot pose is registered from the robot controller.

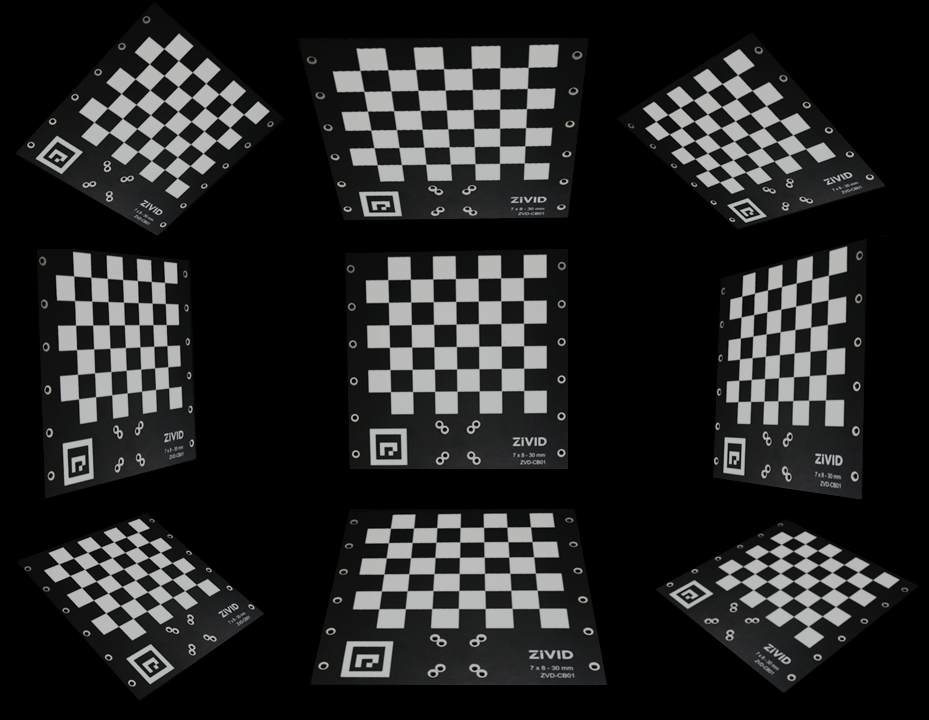
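
The data-gathering loop above can be sketched in a few lines of Python. This is a minimal illustration, not the API of any particular SDK: `collect_dataset`, `PosePair`, and the four callables (`move_robot`, `read_robot_pose`, `capture_image`, `detect_object_pose`) are hypothetical names introduced here, and poses are assumed to be 4x4 homogeneous matrices stored as nested lists. Skipping captures where the calibration object is not detected is also an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence

# Assumption: a pose is a 4x4 homogeneous transform stored as nested lists.
Pose = List[List[float]]


@dataclass
class PosePair:
    """One calibration sample: a robot pose and the matching object pose."""
    robot_pose: Pose   # registered from the robot controller
    object_pose: Pose  # calibration object pose extracted from the image


def collect_dataset(
    targets: Sequence[Pose],
    move_robot: Callable[[Pose], None],
    read_robot_pose: Callable[[], Pose],
    capture_image: Callable[[], object],
    detect_object_pose: Callable[[object], Optional[Pose]],
) -> List[PosePair]:
    """Run the move / capture / extract / register loop over all targets.

    All callables are hypothetical stand-ins for robot and camera interfaces.
    """
    dataset: List[PosePair] = []
    for target in targets:
        move_robot(target)                       # step 1: move the robot
        image = capture_image()                  # step 2: capture an image
        object_pose = detect_object_pose(image)  # step 3: extract object pose
        if object_pose is None:
            # Assumption: drop samples where the object was not detected.
            continue
        robot_pose = read_robot_pose()           # step 4: register robot pose
        dataset.append(PosePair(robot_pose, object_pose))
    return dataset
```

Passing the hardware interfaces in as callables keeps the loop independent of any specific robot or camera, so the same sketch applies to both eye-in-hand and eye-to-hand setups.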

The images below illustrate the dataset of imaging poses and calibration object images for eye-to-hand and eye-in-hand systems.

The images below illustrate the calibration object as seen by the camera.

The hand-eye calibration algorithm then solves a system of homogeneous transformation equations to estimate the rotational and translational components of both the calibration object pose and the hand-eye transformation. A detailed mathematical explanation can be found in Hand-Eye Calibration Solution.
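
As a rough sketch of what these equations can look like, the notation below is an assumption made for illustration and may differ from the one used in Hand-Eye Calibration Solution. Let $P_i$ be the robot (end-effector) pose in the robot base frame for sample $i$, $C_i$ the calibration object pose in the camera frame, $X$ the unknown hand-eye transformation, and $Z$ the unknown but fixed pose of the calibration object. All are 4x4 homogeneous transformation matrices.

For eye-in-hand, $X$ is the camera pose in the end-effector frame and $Z$ is the object pose in the robot base frame:

$$
P_i \, X \, C_i = Z
$$

For eye-to-hand, $X$ is the camera pose in the robot base frame and $Z$ is the object pose in the end-effector frame:

$$
P_i^{-1} \, X \, C_i = Z
$$

Because $Z$ is the same for every sample, equating two samples $i$ and $j$ eliminates it. In the eye-in-hand case this gives the familiar $AX = XB$ form:

$$
\left(P_j^{-1} P_i\right) X = X \left(C_j \, C_i^{-1}\right)
$$

Each pair of samples contributes one such constraint, which is why the robot movements need to be sufficiently distinct.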

Continue reading about Hand-Eye Calibration Residuals.