Working Distance and Camera Positioning

Introduction

In this tutorial, we will learn how to position the camera correctly. We will cover considerations such as field of view (FOV) and working distance, and how they affect, for example, precision and spatial resolution. For physical mounting, check out the available Zivid mounts.

Find the right working distance

In many applications, the camera is mounted in a fixed position and captures point clouds with a constant FOV. In other applications, the camera may be mounted on the robot arm, which gives much more flexibility in how the camera can be positioned to capture good point clouds. In both cases, the camera needs to be positioned so that it is optically optimized for the given scene.

When finding the correct working distance, there are a few things to take into consideration:

What is the area or volume that the camera needs to see?

What is the size of the objects that the camera needs to see?

What is the required spatial resolution and precision in the working area or volume?

Once we know the answers to these questions, we can check if the Zivid camera satisfies the requirements with the following tools:

These tools give theoretical values based on factory verification procedures, but manual validation by capturing and inspecting a point cloud using Zivid Studio is also an option.

Caution

Be aware that the noise is proportional to the working distance, and that the spatial resolution is inversely proportional to the working distance.
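The scaling of spatial resolution with working distance in the caution above can be illustrated with a simple pinhole-camera model. The sketch below is not a Zivid tool: it ignores lens distortion, and the FOV angles and pixel counts are hypothetical placeholders, not the specifications of any Zivid model. It shows that the FOV and the spacing between neighbouring points both grow linearly with distance, so the number of points per millimetre on the object drops as the camera moves away.

```python
import math

# Hypothetical camera parameters for illustration only; look up the real
# values for your camera model in its datasheet.
HFOV_DEG = 60.0   # assumed horizontal field of view
VFOV_DEG = 45.0   # assumed vertical field of view
COLUMNS = 1920    # assumed number of pixel columns
ROWS = 1200       # assumed number of pixel rows


def fov_size_mm(distance_mm: float) -> tuple[float, float]:
    """Width and height (mm) of the area seen at a given distance (pinhole model)."""
    width = 2.0 * distance_mm * math.tan(math.radians(HFOV_DEG) / 2.0)
    height = 2.0 * distance_mm * math.tan(math.radians(VFOV_DEG) / 2.0)
    return width, height


def point_spacing_mm(distance_mm: float) -> tuple[float, float]:
    """Approximate lateral spacing between neighbouring points (mm per pixel)."""
    width, height = fov_size_mm(distance_mm)
    return width / COLUMNS, height / ROWS


# Doubling the distance doubles both the FOV and the point spacing.
for d in (500.0, 1000.0, 1500.0):
    w, h = fov_size_mm(d)
    sx, sy = point_spacing_mm(d)
    print(f"{d:6.0f} mm: FOV {w:.0f} x {h:.0f} mm, spacing {sx:.2f} x {sy:.2f} mm")
```

A larger spacing means a coarser spatial resolution, which is why small objects call for a shorter working distance.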

We may proceed if all requirements for working distance, FOV, resolution and precision can be satisfied with a single camera position. If not, we need to consider using robot mounting, multiple cameras and so on. In that case, we recommend that you contact customersuccess@zivid.com and we will help you find a solution.
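Whether a single camera position can satisfy both the area and the resolution requirements can be roughed out with the same pinhole model. In this hypothetical helper, the required area sets a minimum distance (closer, and it does not fit in the FOV), and the required point spacing sets a maximum distance (farther, and the points are too sparse). The helper name, the horizontal-only simplification, and the example FOV and pixel count are assumptions for illustration; it does not check precision or the camera's own specified working range, which you still need to verify with Zivid's tools or in Zivid Studio.

```python
import math
from typing import Optional, Tuple


def working_distance_range(
    required_width_mm: float,
    max_point_spacing_mm: float,
    hfov_deg: float,
    columns: int,
) -> Optional[Tuple[float, float]]:
    """Distances (mm) at which the area fits AND the points are dense enough.

    Returns (d_min, d_max), or None if no single distance satisfies both.
    Pinhole model, horizontal direction only (hypothetical helper).
    """
    # FOV width grows linearly with distance: width = k * distance
    k = 2.0 * math.tan(math.radians(hfov_deg) / 2.0)
    # Closer than d_min, the required area does not fit in the FOV
    d_min = required_width_mm / k
    # Farther than d_max, neighbouring points are more than max_point_spacing_mm apart
    d_max = max_point_spacing_mm * columns / k
    if d_min > d_max:
        return None  # requirements conflict: consider robot mounting or more cameras
    return d_min, d_max


# Hypothetical numbers: 600 mm wide area, points at most 0.5 mm apart,
# assumed 60 degree horizontal FOV and 1920 pixel columns.
print(working_distance_range(600.0, 0.5, 60.0, 1920))
```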

Position the camera

Mounting the camera straight above the scene is the most common approach and is recommended.

This is especially beneficial for imaging transparent objects and large, highly specular surfaces, particularly dark ones. In these cases, mounting the camera perpendicular to the object maximizes the signal reflected back to the camera.

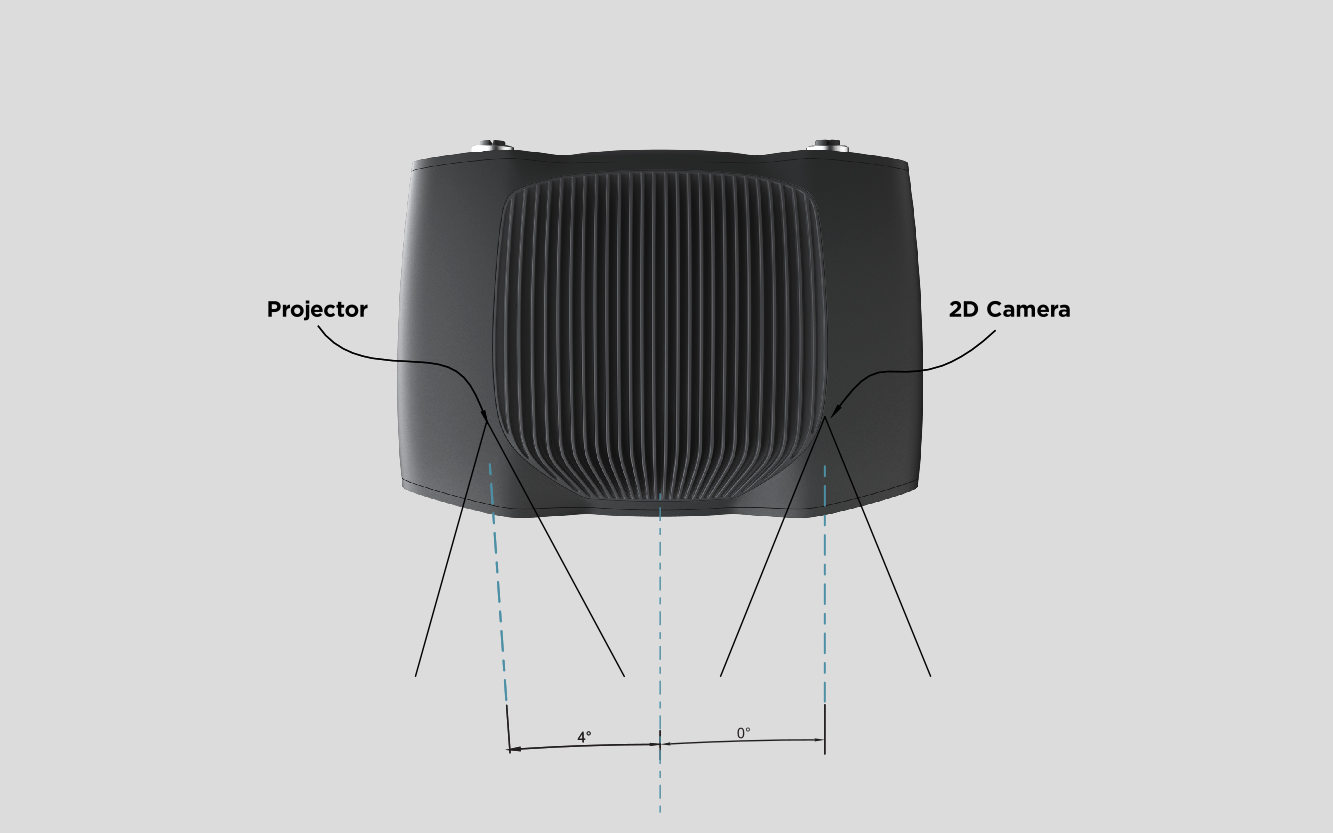

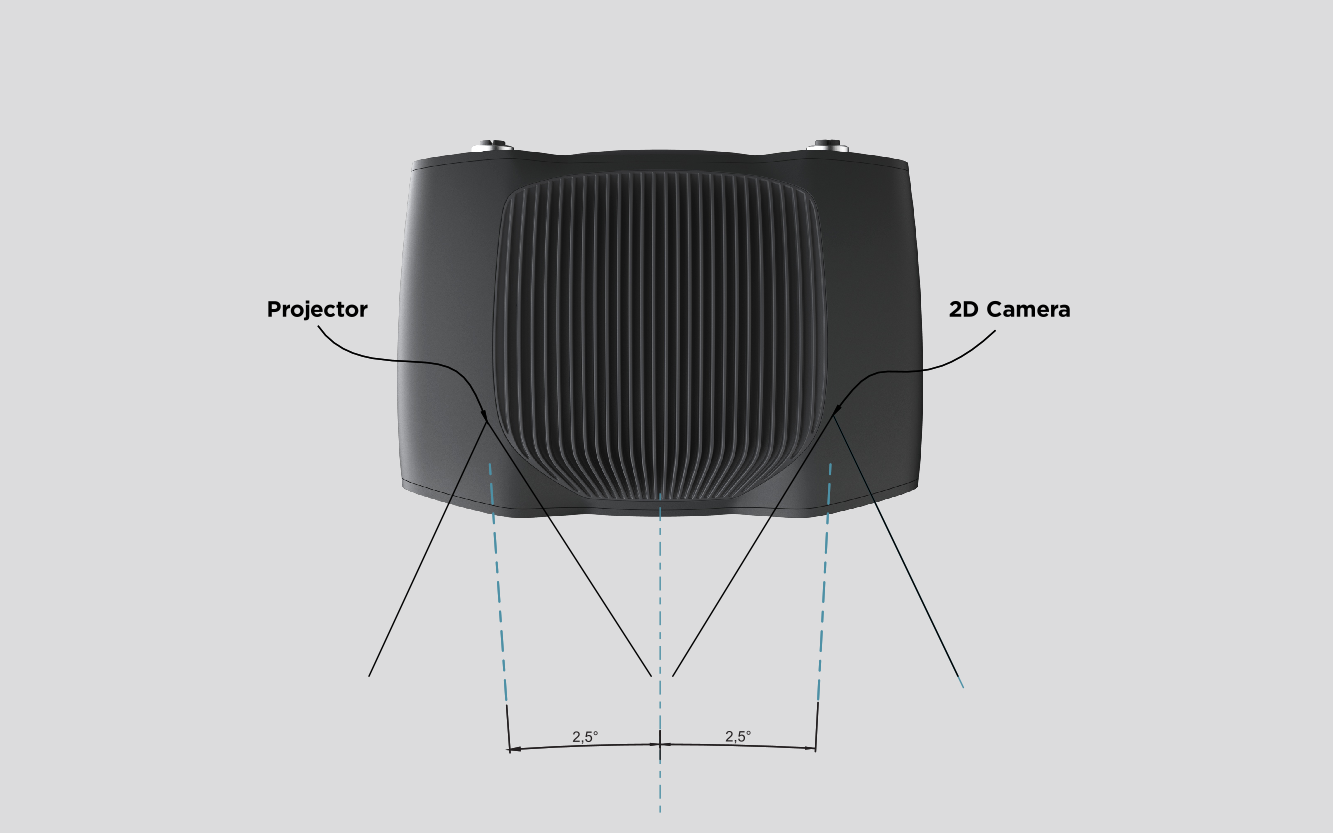

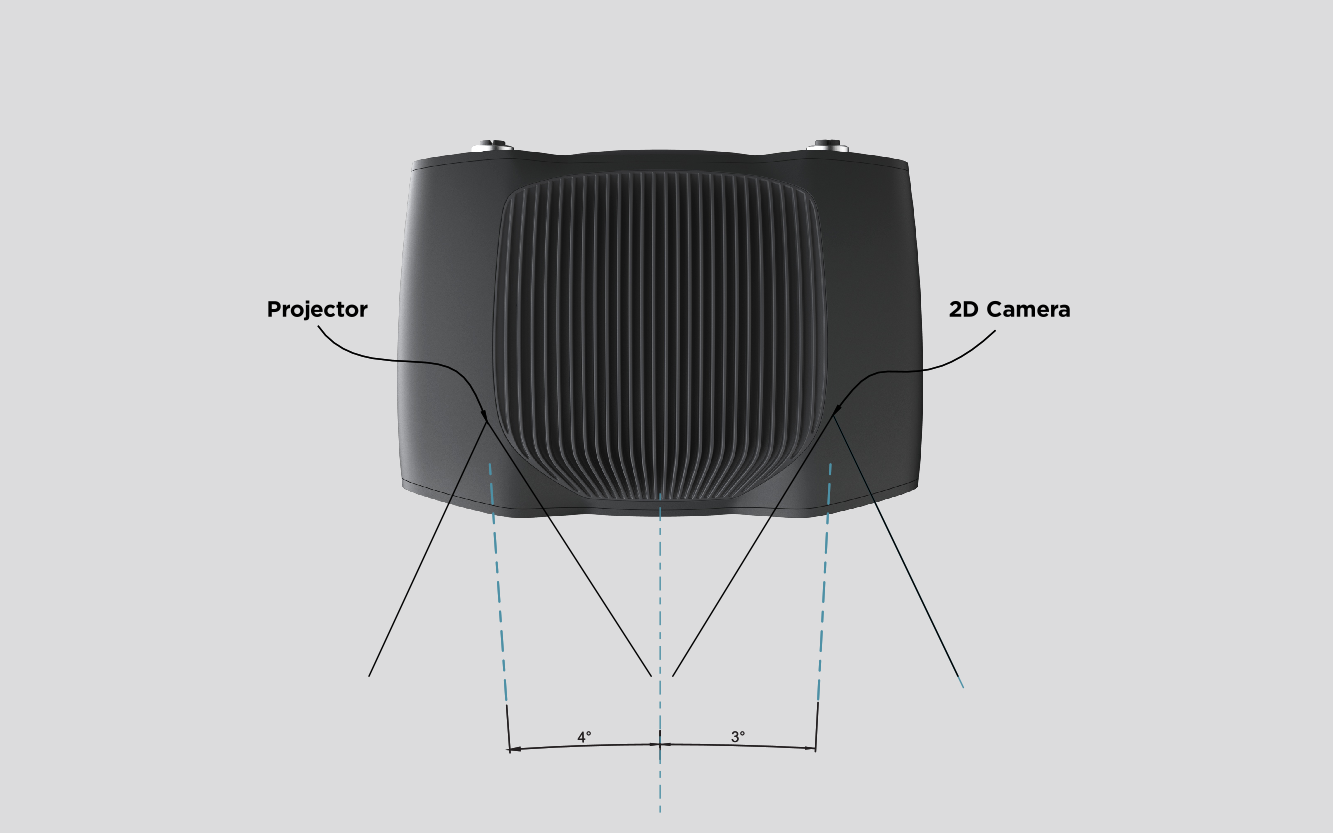

The 2D camera and the projector are each angled with respect to the camera's center axis. Take this into account if you want the camera to be perpendicular to the scene.

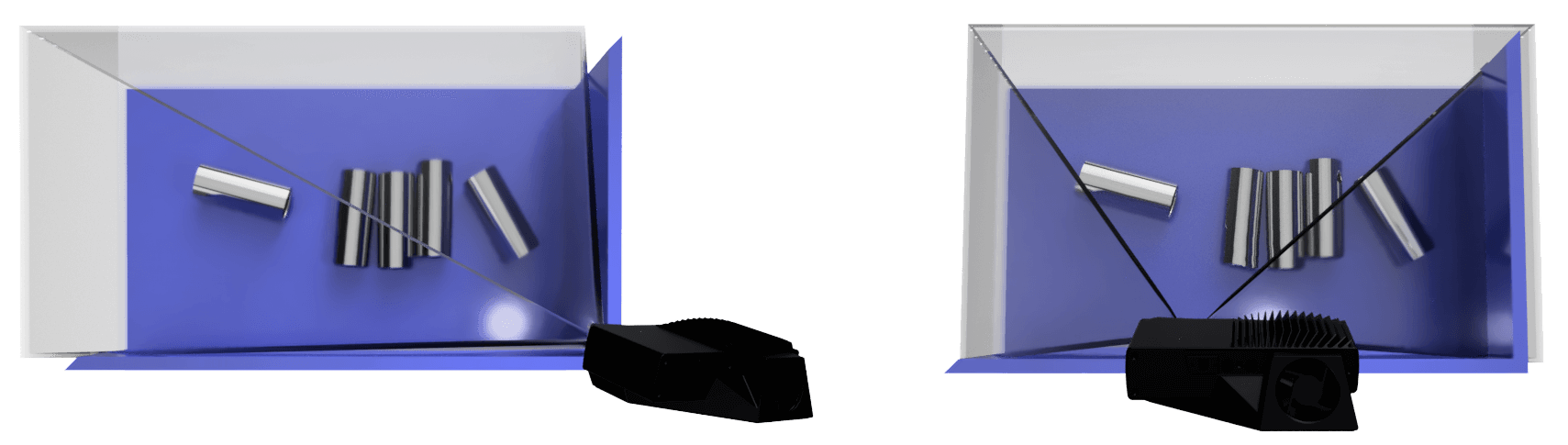

In bin-picking applications

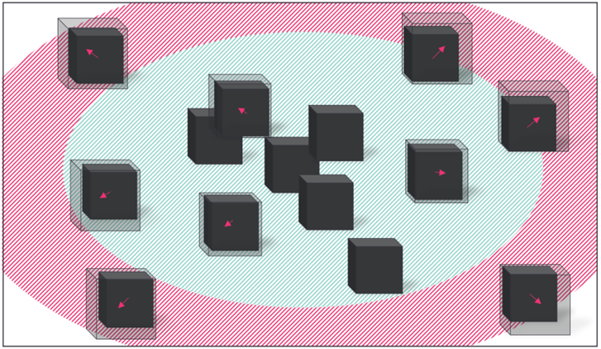

In the presence of strong interreflections from the bin walls, you can place the Zivid camera so that its projector is above the back edge or above the rear corner of the bin (see the image above). Pan and tilt the camera so that the 2D camera looks at the center of the bin. The projector rays should not fall on the inner surfaces of the two walls closest to the projector; instead, they should be almost parallel to those two walls.

Mounting the camera this way minimizes interreflections from the bin walls and also frees up space above the scene, giving tools and robots easier access.
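As a starting point for the pan and tilt in this bin-picking setup, the angles that aim a camera placed above the back edge or rear corner at the center of the bin floor can be estimated with basic trigonometry. This is a simplified sketch with hypothetical names and coordinates: it treats the camera as a single point and ignores the offset and built-in angles between the 2D camera and the projector, and it does not verify that the projector rays stay parallel to the near walls. Fine-tune the pose by inspecting the point cloud.

```python
import math


def aim_at_bin_center(cam_x, cam_y, cam_height, bin_length, bin_width, bin_depth):
    """Pan and tilt (degrees) that point the optical axis at the center of the bin floor.

    Hypothetical coordinate frame (mm): origin at one rim corner, x along
    bin_length, y along bin_width, z up. The rim is at z = 0, the floor at
    z = -bin_depth, and the camera at (cam_x, cam_y, cam_height).
    """
    dx = bin_length / 2.0 - cam_x
    dy = bin_width / 2.0 - cam_y
    dz = cam_height + bin_depth  # vertical drop from the camera to the bin floor
    pan = math.degrees(math.atan2(dy, dx))  # rotation about the vertical axis, from +x
    tilt = math.degrees(math.atan2(math.hypot(dx, dy), dz))  # angle away from straight down
    return pan, tilt


# Camera above the rear corner, 800 mm above the rim of a 600 x 400 x 300 mm bin.
print(aim_at_bin_center(0.0, 0.0, 800.0, 600.0, 400.0, 300.0))
```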

In strong ambient lighting conditions, direct reflections from the light source may create unwanted highlights in the 2D image. To minimize these highlights, try slightly moving or tilting the camera.

Optimize distance for high-accuracy requirements

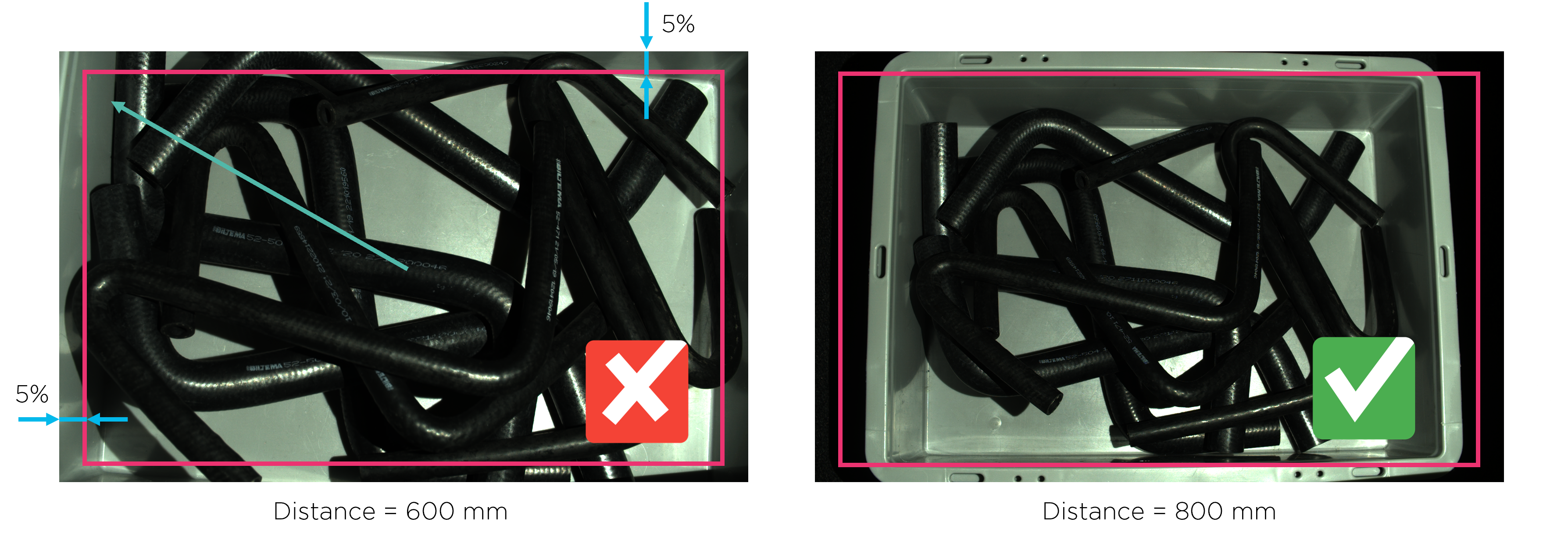

In general, it is recommended to place the camera as close to the scene as possible, because accuracy is better at shorter imaging distances. However, accuracy is also best in the center of the image and decreases slightly towards the corners, as illustrated in the image above.

Therefore, we recommend installing the camera at a distance slightly larger than the minimum, so that the outer 5% of the image near its edges is not used. For most applications, this happens naturally due to mounting safety margins.
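To estimate how much extra distance this edge margin requires, you can compute the distance at which the scene fills only the central part of the image. The sketch below assumes a pinhole model, interprets the 5% as a margin at each side edge (an assumption you can change via `margin`), and uses a hypothetical `min_distance_mm` for the camera's minimum working distance; take the real value from the camera's specifications.

```python
import math


def distance_with_edge_margin(scene_width_mm, hfov_deg, margin=0.05, min_distance_mm=0.0):
    """Smallest distance (mm) at which the scene fits inside the central part of the image.

    margin is the fraction of the image width left unused at EACH side edge
    (assumed interpretation). The result is never below min_distance_mm.
    """
    if not 0.0 <= margin < 0.5:
        raise ValueError("margin must be in [0, 0.5)")
    usable_fraction = 1.0 - 2.0 * margin
    # The full FOV must be wider than the scene so that the scene uses only the center
    full_width_needed = scene_width_mm / usable_fraction
    d = full_width_needed / (2.0 * math.tan(math.radians(hfov_deg) / 2.0))
    return max(d, min_distance_mm)


# Hypothetical: 800 mm wide scene, assumed 60 degree HFOV, assumed 500 mm minimum distance.
print(distance_with_edge_margin(800.0, 60.0, min_distance_mm=500.0))
```

Run it for both the width and the height of the scene (with the matching FOV angle) and use the larger result.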

Further reading

When we have found our working distance, it is time to find the right camera settings. We will cover this in Getting the Right Exposure for Good Point Clouds.