Downsampling Theory

Introduction

This article explains the theory behind downsampling a Zivid point cloud. If you are interested in the SDK implementation, go to the Downsample article.

Why Not Keep Every Nth Pixel?

When downsampling an image, the goal is to reduce the size of the image while preserving as much of the quality as possible.

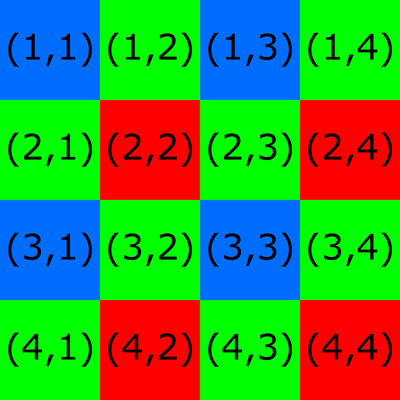

A common approach to downsampling is to keep every second, third, fourth, etc., pixel and discard the rest. However, this is not necessarily ideal because of the color filters on the sensor. Take the Bayer filter mosaic as an example. The Bayer filter mosaic grid for a 4x4 image is shown below.

Note

The Zivid point cloud coordinate system starts at (0,0).

With this filter, each pixel on the camera corresponds to one color filter on the mosaic.

Consider a downsampling algorithm that keeps every other pixel. Whichever pixel it starts from, all kept pixels would correspond to the same color filter:

(1,1) (1,3) (3,1) (3,3) → Blue

(2,1) (2,3) (4,1) (4,3) → Green

(1,2) (1,4) (3,2) (3,4) → Green

(2,2) (2,4) (4,2) (4,4) → Red
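The effect can be checked with a small sketch. It assumes NumPy and a BGGR tile layout inferred from the listing above. The variable names are illustrative, and the code uses 0-based offsets, so offset (0, 0) corresponds to pixel (1,1) in the listing.

```python
import numpy as np

# Assumed BGGR tile, inferred from the listing: (1,1) is blue, (2,2) is red,
# and the two remaining positions are green.
tile = np.array([["B", "G"],
                 ["G", "R"]])
mosaic = np.tile(tile, (2, 2))  # 4x4 grid of color-filter labels

# Keep every other pixel for each of the four possible starting offsets
# and record which color filters survive.
kept_filters = {
    (row0, col0): set(mosaic[row0::2, col0::2].ravel().tolist())
    for row0 in (0, 1)
    for col0 in (0, 1)
}

for offset, filters in kept_filters.items():
    print(offset, filters)
```

Each starting offset keeps pixels from exactly one color filter, which is why plain striding discards the information from the other filters.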

Recommended Downsampling Procedure

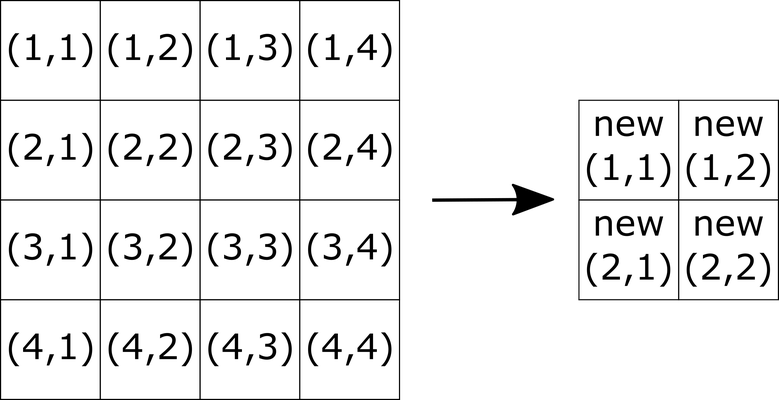

To preserve data quality, you should consider all pixels and perform downsampling on an even pixel grid (2x2, 4x4, 6x6, etc.). Here is a recommended procedure to halve the image size in each dimension, e.g., from 4x4 to 2x2.

Downsampling RGB values (color image)

Calculate each pixel value of the new image by averaging every 2x2 pixel grid of the initial image for each channel R, G, and B. For example, each new R value is the mean of the four R values in the corresponding 2x2 pixel grid.
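Written out, the average for the new pixel $(i,j)$ could look as follows. The 1-based index convention and the name $R_{new}$ are assumptions made for illustration; they follow the indexing of the Bayer example above.

$$
R_{new}(i,j) = \frac{R(2i-1,\,2j-1) + R(2i-1,\,2j) + R(2i,\,2j-1) + R(2i,\,2j)}{4}
$$

For a 4x4 image, $i$ and $j$ each run over 1 and 2, giving the four pixels of the 2x2 result.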

Repeat the same process for G and B values.
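The averaging step can be sketched with NumPy. This is a minimal illustration, not the SDK implementation: the function name `downsample_rgb` and the `(H, W, 3)` array layout are assumptions.

```python
import numpy as np

def downsample_rgb(rgb: np.ndarray, factor: int = 2) -> np.ndarray:
    """Average every factor x factor block of an (H, W, 3) color image.

    Hypothetical helper for illustration; returns floating-point values.
    """
    height, width, channels = rgb.shape
    if height % factor or width % factor:
        raise ValueError("image height and width must be divisible by factor")
    # Split rows and columns into (block index, position inside block).
    blocks = rgb.reshape(height // factor, factor, width // factor, factor, channels)
    # Average over the two within-block axes; R, G, and B are averaged separately.
    return blocks.astype(np.float64).mean(axis=(1, 3))

# Example: a synthetic 4x4 image reduced to 2x2.
rgb = np.arange(4 * 4 * 3, dtype=np.uint8).reshape(4, 4, 3)
small = downsample_rgb(rgb)
print(small.shape)
```

The result is kept as floating point here; converting back to 8-bit color would require rounding.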

Downsampling XYZ values (point cloud)

For point cloud data, we also need to handle NaN values. As with the R, G, B color values, the X, Y, Z values of the new image should be calculated from every 2x2 pixel grid of the initial image. Instead of a normal average, use an SNR-weighted average for each coordinate. To brush up on how Zivid uses SNR, check out our SNR page.

There are cases where the X, Y, Z coordinates of a pixel are NaN, but the SNR for that pixel is not NaN. To handle this, check whether any pixel has a NaN value for one of its X, Y, Z coordinates. If it does, replace the SNR value for that pixel with zero. This can be done by selecting the pixels whose coordinates (e.g. Z) are NaN and setting their SNR value to zero.
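A minimal NumPy sketch of this masking step is shown here. The array names and the `(H, W, 3)` layout for the coordinates are assumptions, and the values are made up for illustration.

```python
import numpy as np

# Illustrative 2x2 point cloud, one pixel of which has no valid coordinates.
xyz = np.array([[[1.0, 2.0, 3.0], [np.nan, np.nan, np.nan]],
                [[4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]])
snr = np.array([[10.0, 20.0],
                [30.0, 40.0]])

# Mark a pixel invalid if any of its X, Y, Z values is NaN.
invalid = np.isnan(xyz).any(axis=2)

# Invalid pixels get an SNR of zero so they carry no weight later on.
snr_masked = np.where(invalid, 0.0, snr)
print(snr_masked)
```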

The next step is to calculate the sum of the SNR values in every 2x2 pixel grid.

After this, calculate the weight for each pixel of the initial image from its SNR value and the SNR sum of its 2x2 pixel grid.
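One way to write these two steps is sketched here. It assumes 1-based indices, with new pixel $(i,j)$ covering the initial pixels $(m,n)$ for $m \in \{2i-1, 2i\}$ and $n \in \{2j-1, 2j\}$. The names $SNR_{sum}$ and $W$ are illustrative.

$$
SNR_{sum}(i,j) = \sum_{m=2i-1}^{2i} \sum_{n=2j-1}^{2j} SNR(m,n),
\qquad
W(m,n) = \frac{SNR(m,n)}{SNR_{sum}(i,j)}
$$

With this definition the four weights in a grid sum to one whenever $SNR_{sum}(i,j) > 0$. A grid whose four SNR values are all zero has no defined weight and needs separate handling.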

To avoid having to deal with

Finally, calculate the new X, Y, Z coordinate values as the weighted average over each 2x2 pixel grid, starting with X.
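As a sketch, let $W(m,n)$ denote the weight of initial pixel $(m,n)$ from the previous step, and assume 1-based indices so that new pixel $(i,j)$ covers rows $2i-1$ to $2i$ and columns $2j-1$ to $2j$. The name $X_{new}$ is illustrative.

$$
X_{new}(i,j) = \sum_{m=2i-1}^{2i} \sum_{n=2j-1}^{2j} W(m,n)\, X(m,n)
$$

Pixels with zero weight should not contribute to the sum. In floating-point arithmetic, however, $0 \cdot \mathrm{NaN}$ is NaN, so an implementation has to exclude or replace NaN coordinates before summing.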

The same should be done for Y and Z values.
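The whole XYZ procedure can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the SDK implementation: the function name `downsample_xyz` is hypothetical, NaN coordinates are replaced with zero before the weighted sum so that they cannot propagate, and a grid whose SNR sum is zero is assigned NaN in the output.

```python
import numpy as np

def downsample_xyz(xyz: np.ndarray, snr: np.ndarray, factor: int = 2) -> np.ndarray:
    """SNR-weighted block average of an (H, W, 3) point cloud (hypothetical helper)."""
    height, width, _ = xyz.shape
    if height % factor or width % factor:
        raise ValueError("image height and width must be divisible by factor")

    # Pixels with any NaN coordinate get zero SNR, hence zero weight.
    invalid = np.isnan(xyz).any(axis=2)
    snr = np.where(invalid, 0.0, snr).astype(np.float64)
    # Assumption: zero out invalid coordinates so that 0 * NaN cannot appear.
    xyz_filled = np.where(invalid[..., None], 0.0, xyz)

    new_h, new_w = height // factor, width // factor
    snr_blocks = snr.reshape(new_h, factor, new_w, factor)
    xyz_blocks = xyz_filled.reshape(new_h, factor, new_w, factor, 3)

    # Sum of SNR per block, and sum of SNR * coordinate per block.
    snr_sum = snr_blocks.sum(axis=(1, 3))
    weighted_sum = (xyz_blocks * snr_blocks[..., None]).sum(axis=(1, 3))

    # Dividing by the block SNR sum equals summing weight * coordinate,
    # with weight = SNR / SNR sum. Assumption: blocks with zero SNR sum stay NaN.
    result = np.full((new_h, new_w, 3), np.nan)
    valid = snr_sum > 0
    result[valid] = weighted_sum[valid] / snr_sum[valid][:, None]
    return result

# Example: one 2x2 block in which one pixel has no valid coordinates.
xyz = np.array([[[1.0, 2.0, 3.0], [np.nan, np.nan, np.nan]],
                [[4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]])
snr = np.array([[10.0, 20.0],
                [30.0, 40.0]])
new_xyz = downsample_xyz(xyz, snr)
print(new_xyz)
```

In the example, the invalid pixel is ignored and the remaining three points are averaged with weights 10/80, 30/80, and 40/80.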